dSPACE brings OMNIVISION camera models into AURELION to boost ADAS and autonomous driving simulation

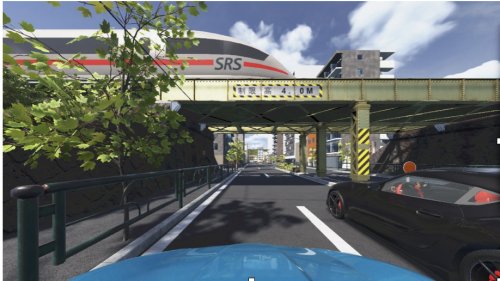

dSPACE, a global leader in simulation and validation solutions for automotive development, has announced a collaboration with OMNIVISION, a well-known developer of CMOS image sensor technologies, to improve how advanced driver assistance systems (ADAS) and autonomous driving (AD) functions are developed and validated. Under the partnership, realistic models of OMNIVISION’s automotive camera sensors are being integrated into AURELION, dSPACE’s physics-based simulation environment for virtual testing of complex vehicle systems. The move addresses a critical challenge for OEMs and suppliers: ensuring that perception systems behave reliably across a wide range of real-world scenarios before vehicles reach public roads.

Cameras play a central role in modern ADAS and autonomous vehicles, supporting key functions such as object and pedestrian detection, lane recognition, traffic sign reading, and obstacle avoidance. However, validating camera-based perception systems through physical road tests alone is costly, time-consuming, and often insufficient to cover rare or dangerous edge cases. With OMNIVISION’s image sensor models embedded directly in AURELION, developers can simulate how real camera hardware behaves under varying lighting conditions, weather scenarios, road geometries, and traffic situations. Engineers can then test perception algorithms in a virtual environment that closely mirrors real-world conditions and camera behavior, helping them detect weaknesses and optimize system behavior much earlier in the development cycle.

The enhanced sensor realism within AURELION enables developers to recreate complex driving scenarios with a high degree of accuracy, including challenging conditions such as low light, glare, shadows, and adverse weather. This level of detail is crucial for validating perception systems that must operate safely and consistently in unpredictable environments. With these simulations, teams can evaluate robustness and reliability long before prototype vehicles are built, reducing dependence on physical testing and significantly accelerating development timelines. The approach also helps lower overall costs by identifying potential issues early, when changes are easier and less expensive to implement.

From dSPACE’s perspective, the integration expands the capabilities of its sensor simulation portfolio and strengthens its ecosystem of technology partners. By working closely with a leading semiconductor and image sensor company, dSPACE can offer customers more accurate testing options that reflect the behavior of production-ready camera hardware. This, in turn, supports a smoother transition from virtual validation to real-world deployment. For automotive manufacturers and Tier-1 suppliers, the result is a more efficient path from concept to validated ADAS and autonomous driving functions, with fewer late-stage surprises.

For OMNIVISION, the collaboration provides an opportunity to place models of its high-performance image sensors in a sophisticated virtual testing environment that mirrors how the sensors are used in real vehicles. By making sensor behavior available in simulation, OMNIVISION enables developers to better understand how perception systems respond to real-world challenges and to fine-tune algorithms accordingly. This helps ensure that camera-based perception solutions deliver the accuracy, safety, and reliability demanded by next-generation vehicles.

Overall, the integration of OMNIVISION camera models into dSPACE’s AURELION platform reflects a broader industry shift toward simulation-driven development. As vehicles become increasingly software-defined and reliant on complex sensor fusion, realistic virtual testing is becoming essential rather than optional. Partnerships like this one highlight how collaboration across the automotive technology ecosystem can shorten development cycles, improve system quality, and support the delivery of safer, more reliable ADAS and autonomous driving solutions to the market.